How AI reduces bias in recruitment for HR leaders

Many HR professionals worry that artificial intelligence introduces new biases into recruitment. The reality is quite different. When properly designed and implemented, AI systems can significantly reduce the unconscious bias that affects human decision-making. This article explores how AI identifies hidden prejudices in hiring processes, the specific techniques that ensure fairness, and practical steps HR leaders in the UK and Spain can take to implement bias-reducing AI solutions in their organisations. You’ll discover evidence-based strategies to create more equitable recruitment outcomes whilst maintaining efficiency and compliance.

Table of Contents

- Understanding Bias In Recruitment And AI’s Role

- How AI Systems Reduce Bias: Techniques And Safeguards

- Real-World Applications And Benefits Of AI For Bias Reduction In Recruitment

- Implementing AI Bias Reduction In Your Recruitment Process

- Discover AI Tools For Bias-Free Recruitment

Key takeaways

| Point | Details |
|---|---|
| AI enables objective decisions | Data-driven analysis removes subjective judgements that lead to unconscious bias in candidate evaluation |
| Proper design prevents bias | Balanced datasets, fairness algorithms, and transparent processes ensure AI tools reduce rather than replicate existing prejudices |
| Human oversight remains essential | AI works best when combined with trained HR professionals who monitor outputs and maintain ethical standards |
| Implementation requires strategy | Successful adoption involves vendor evaluation, staff training, continuous monitoring, and compliance with UK and Spain regulations |
| Measurable diversity improvements | Organisations using AI screening report faster hiring timelines and demonstrably more diverse candidate pools |

Understanding bias in recruitment and AI’s role

Recruitment bias occurs when subjective factors influence hiring decisions beyond candidate qualifications and potential. Common types include unconscious bias, where recruiters unknowingly favour candidates from familiar backgrounds; affinity bias, which draws us towards people like ourselves; and confirmation bias, which makes us seek information that supports our initial impressions. These prejudices harm organisations by limiting diversity, reducing innovation, and creating legal risks under equality legislation.

Traditional recruitment methods rely heavily on human judgement, making them vulnerable to these cognitive shortcuts. A recruiter might spend just six seconds reviewing a CV, making snap decisions based on name, education, or previous employers rather than actual capabilities. These quick assessments often reflect societal stereotypes rather than job requirements.

AI can surface patterns of unconscious bias in recruitment data so that HR teams can address them. Machine learning algorithms analyse thousands of hiring decisions to spot correlations between candidate characteristics and selection outcomes. This analysis reveals biases that human recruiters cannot easily detect in their own behaviour.

AI systems offer several advantages for uncovering hidden prejudices:

- Consistent evaluation criteria applied to every candidate without fatigue or mood variations

- Pattern recognition across large datasets that humans cannot process manually

- Removal of identifying information that triggers unconscious associations

- Quantifiable metrics that track fairness improvements over time

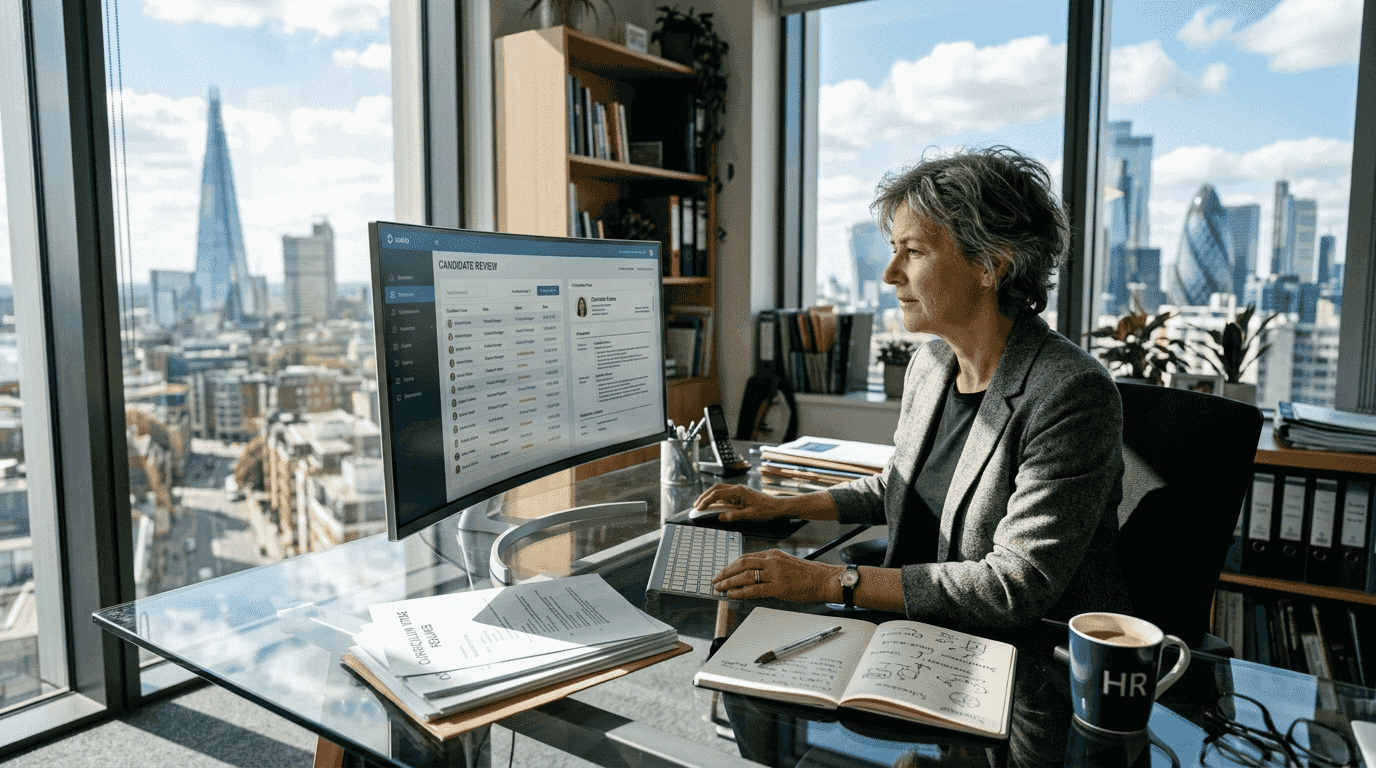

The technology complements rather than replaces human recruiters. AI handles initial screening and bias detection, whilst HR professionals make final decisions and ensure candidates receive personalised attention. This partnership combines algorithmic objectivity with human empathy and contextual understanding.

How AI systems reduce bias: techniques and safeguards

Effective AI recruitment platforms employ specific techniques to detect and mitigate bias throughout the hiring process. Anonymisation removes names, ages, genders, and other demographic information from initial screening. Algorithms then evaluate candidates on skills, experience, and assessment performance rather than on characteristics unrelated to job success.
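To make the anonymisation step concrete, here is a minimal Python sketch. The field names and the `anonymise` helper are illustrative assumptions, not the schema of any particular recruitment platform.

```python
# Hypothetical field names; a real applicant tracking system will differ.
DEMOGRAPHIC_FIELDS = {"name", "age", "gender", "date_of_birth", "photo_url", "nationality"}


def anonymise(candidate: dict, candidate_id: str) -> dict:
    """Return a copy of the record without demographic fields.

    The original record is left untouched so HR can re-identify the
    candidate once the blind screening stage is complete.
    """
    redacted = {key: value for key, value in candidate.items()
                if key not in DEMOGRAPHIC_FIELDS}
    redacted["candidate_id"] = candidate_id  # opaque reference, not a name
    return redacted


# Illustrative record, not real candidate data.
candidate = {
    "name": "Alex Example",
    "age": 41,
    "gender": "female",
    "skills": ["python", "sql"],
    "years_experience": 12,
    "assessment_score": 0.82,
}

screening_view = anonymise(candidate, candidate_id="C-0001")
print(screening_view)
```

Dropping fields is only a first step. Remaining attributes such as postcode or university can still act as demographic proxies, which is why the audits described later in this section matter.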

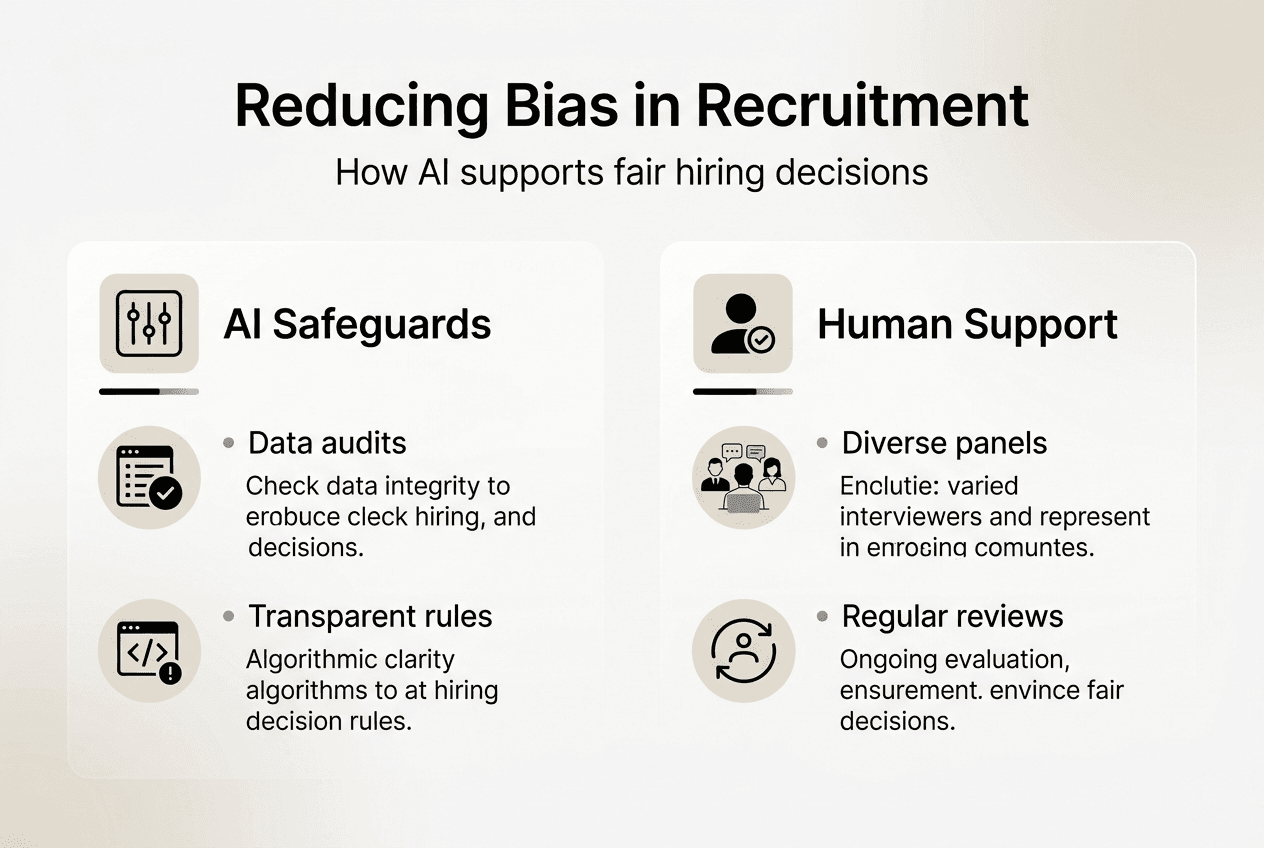

Balanced training datasets prevent AI from learning existing organisational biases. If historical hiring data shows preference for certain universities or backgrounds, developers must correct these imbalances before training algorithms. Fairness algorithms then monitor outputs to ensure no protected groups face systematic disadvantage.
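One well-known way to correct such imbalances is reweighing, where each historical record receives a weight so that group membership and the hiring outcome become statistically independent in the training set. The sketch below is an illustration under assumed data, not a description of how any specific vendor balances its datasets; the group labels and counts are hypothetical.

```python
from collections import Counter


def reweigh(groups: list[str], labels: list[int]) -> list[float]:
    """Weight each record by P(group) * P(label) / P(group, label).

    Under these weights, every group has the same weighted hire rate.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_hire_rate(group: str, groups, labels, weights) -> float:
    """Weighted share of hired (label 1) records within one group."""
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(w for y, w in rows if y == 1) / sum(w for _, w in rows)


# Hypothetical history: group A was hired 4 times out of 6, group B 1 out of 4.
groups = ["A"] * 6 + ["B"] * 4
labels = [1, 1, 1, 1, 0, 0] + [1, 0, 0, 0]

weights = reweigh(groups, labels)
print(weighted_hire_rate("A", groups, labels, weights))
print(weighted_hire_rate("B", groups, labels, weights))
```

The weights would then be passed to a training routine that accepts per-sample weights. Whether reweighing is the right correction depends on the data and the role, so treat it as one option among several.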

Transparency improves bias detection and builds trust in recruitment platforms. Explainable AI systems show which factors influenced candidate rankings, allowing HR teams to verify that decisions align with job requirements. This visibility prevents black-box effects where nobody understands why the system made particular choices.
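As a simple illustration of explainability, the sketch below uses a transparent weighted score and reports how much each factor contributed to the total. The factor names and weights are assumptions chosen for the example; production systems may use more complex models with dedicated explanation methods.

```python
# Hypothetical weights agreed with the hiring manager for one role.
WEIGHTS = {
    "skills_test": 0.5,
    "relevant_experience": 0.3,
    "structured_interview": 0.2,
}


def explain_score(features: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the total score and each factor's contribution to it."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    return sum(contributions.values()), contributions


# Illustrative candidate scores on a 0 to 1 scale.
total, parts = explain_score(
    {"skills_test": 0.9, "relevant_experience": 0.6, "structured_interview": 0.7}
)

# List factors from most to least influential so a reviewer can check
# that the ranking reflects the job requirements.
for name, value in sorted(parts.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {value:.2f}")
print(f"total: {total:.2f}")
```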

| Traditional recruitment | AI-enhanced recruitment |
|---|---|
| CV screening based on subjective impressions | Anonymised skills assessment with standardised scoring |
| Interview questions vary between candidates | Structured interviews with consistent evaluation criteria |
| Bias detection relies on individual awareness | Algorithmic monitoring identifies patterns across all decisions |
| Limited accountability for unfair outcomes | Transparent audit trails document selection rationale |

Several safeguards ensure AI systems maintain fairness over time. Regular audits examine algorithm outputs for disparate impact on different demographic groups. Diverse development teams bring multiple perspectives to system design, catching potential biases others might miss. Continuous monitoring tracks whether AI recommendations lead to equitable hiring outcomes in practice.
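A disparate impact audit can be sketched in a few lines of Python. The example compares each group's selection rate with the highest group's rate and flags any ratio below a review threshold. The 0.8 threshold is the four-fifths heuristic from US selection guidance and is used here only as an assumed trigger for human review; it is not a UK or Spanish legal test. The group labels and counts are hypothetical.

```python
# Four-fifths heuristic from US selection guidance. Treating it as the
# review trigger here is an assumption, not a UK or Spanish legal test.
REVIEW_THRESHOLD = 0.8


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate.

    `outcomes` maps a group label to (selected, applied).
    """
    rates = {
        group: selected / applied
        for group, (selected, applied) in outcomes.items()
        if applied > 0  # skip groups with no applicants in the audit window
    }
    best = max(rates.values(), default=0.0)
    if best == 0:
        raise ValueError("no selections recorded, so ratios are undefined")
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical audit data: (selected, applied) per self-reported group.
outcomes = {"group_a": (45, 150), "group_b": (20, 100)}

ratios = impact_ratios(outcomes)
flagged = [group for group, ratio in ratios.items() if ratio < REVIEW_THRESHOLD]
print(ratios)
print("needs review:", flagged)
```

A flagged ratio is a prompt for investigation rather than proof of discrimination, particularly when group sizes are small.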

Understanding what makes screening unbiased helps organisations evaluate potential AI vendors. Look for providers who publish fairness metrics, undergo independent audits, and actively work to eliminate bias from their systems.

Pro Tip: Create a diverse review panel that regularly examines AI outputs alongside hiring outcomes. Include HR professionals, department managers, and employee representatives to catch emerging biases before they become systemic problems.

Real-world applications and benefits of AI for bias reduction in recruitment

Companies using AI for candidate screening have reported faster, fairer hiring with reduced bias. One multinational technology firm implemented AI-powered CV screening and saw its interview shortlists become 40% more diverse within six months. The system evaluated candidates on technical skills and problem-solving abilities rather than prestigious university names or traditional career paths.

Another organisation in the financial sector combined AI interviews with human assessment. The technology asked every candidate identical questions and evaluated responses based on content rather than delivery style or accent. This standardisation removed interviewer preferences that previously favoured certain communication styles. The company reported a 35% reduction in time to hire whilst simultaneously improving diversity metrics.

Practical applications where AI reduces recruitment bias include:

- Skills-based matching that focuses on capabilities rather than credentials or background

- Blind screening that removes demographic information from initial evaluation

- Structured AI interviews ensuring consistent candidate assessment

- Predictive analytics identifying which qualifications actually predict job success

- Real-time bias alerts when human reviewers show patterns of unfair evaluation

Measurable benefits extend beyond fairness. Organisations report faster hiring cycles because AI handles initial screening efficiently. Candidate quality improves when selection focuses on relevant skills rather than superficial characteristics. Employee retention increases because better matching reduces early turnover from poor cultural fit.

The role of AI in recruitment continues evolving as technology advances. Modern systems can assess soft skills through video analysis, evaluate cultural alignment without demographic proxies, and predict candidate success based on behaviour patterns rather than background characteristics.

Pro Tip: Combine AI screening with structured interviews where human recruiters ask predetermined questions in a fixed order. This hybrid approach pairs algorithmic objectivity with personal connection and tends to deliver the strongest bias reduction results.

HR leaders should track specific metrics to verify AI effectiveness. Monitor diversity statistics at each hiring stage, measure time to hire across different candidate groups, and survey applicants about their experience. These data points reveal whether AI implementations achieve intended fairness goals.
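Stage-by-stage tracking can be sketched as follows. The code computes, for each group, the share of candidates at one stage who reach the next, so a drop that affects only one group is visible at the stage where it happens. The stage names and counts are hypothetical.

```python
STAGES = ["applied", "screened", "interviewed", "offered"]

# Hypothetical funnel counts per self-reported group.
funnel = {
    "group_a": {"applied": 200, "screened": 80, "interviewed": 40, "offered": 10},
    "group_b": {"applied": 100, "screened": 30, "interviewed": 12, "offered": 3},
}


def pass_through_rates(counts: dict[str, int]) -> dict[str, float]:
    """Share of candidates at each stage who reach the next stage."""
    return {
        f"{current}->{following}": counts[following] / counts[current]
        for current, following in zip(STAGES, STAGES[1:])
        if counts[current] > 0  # avoid dividing by zero for empty stages
    }


report = {group: pass_through_rates(counts) for group, counts in funnel.items()}

for group, rates in report.items():
    summary = ", ".join(f"{step} {rate:.0%}" for step, rate in rates.items())
    print(f"{group}: {summary}")
```

In this made-up data the two groups diverge at screening and interview but not at offer, which would point a review towards the earlier stages.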

Implementing AI bias reduction in your recruitment process

Successful AI adoption requires systematic planning and ongoing commitment to ethical practices. HR leaders should follow these steps to integrate bias-reducing technology effectively:

- Assess current recruitment processes to identify where bias most commonly occurs and which stages would benefit most from AI intervention

- Define clear objectives for what you want AI to achieve, including specific diversity targets and fairness metrics you will track

- Research vendors thoroughly, prioritising those with proven bias reduction results, transparent algorithms, and compliance with UK and Spanish equality legislation

- Evaluate AI systems using your own historical data to verify they reduce rather than replicate existing biases in your organisation

- Train HR staff and hiring managers on how AI tools work, their limitations, and how to interpret algorithmic recommendations appropriately

- Implement data governance policies ensuring candidate information remains secure and AI systems comply with GDPR requirements

- Start with pilot programmes in one department or role type before organisation-wide rollout, allowing you to refine processes and address issues

- Monitor outcomes continuously, tracking whether AI recommendations lead to more diverse hires and equitable treatment across protected characteristics

- Maintain human oversight at critical decision points, ensuring algorithms support rather than replace professional judgement

- Review and update AI systems regularly as your organisation evolves and new bias patterns emerge

Effective AI implementation requires continuous monitoring and human oversight to maintain fairness. Technology alone cannot solve bias without committed leadership and organisational culture change.

Ethical considerations must guide every implementation decision. Ensure candidates understand when AI evaluates their applications and provide human contact points for questions or concerns. Be transparent about what data you collect and how algorithms use it. Respect candidate privacy whilst gathering information needed for fair assessment.

Compliance requirements differ between the UK and Spain. Both countries prohibit discrimination based on protected characteristics, but the specific regulations around automated decision-making vary. Consult legal experts familiar with local employment law before implementing AI recruitment tools.

Consider the advantages of replacing CVs with skills-based assessments. This approach removes many bias triggers whilst providing a better prediction of job performance.

Collaborate closely with AI vendors throughout implementation. Reputable providers offer training, ongoing support, and regular algorithm updates. They should willingly discuss how their systems detect and prevent bias, sharing audit results and fairness metrics. Avoid vendors who cannot explain their technology or resist transparency about bias mitigation methods.

Discover AI tools for bias-free recruitment

Modern AI recruitment platforms help HR leaders build fairer hiring processes whilst improving efficiency. These solutions move beyond traditional CV screening to assess candidates on actual capabilities through skills tests, cognitive assessments, and structured interviews. Technology that focuses on matching candidates based on skills rather than credentials reduces bias whilst identifying talent others overlook.

We Are Over The Moon offers an AI candidate validation platform designed specifically to reduce recruitment bias. Our system combines real assessments, company challenges, and cultural matching to evaluate candidates fairly. You can explore how our approach helps organisations build diverse teams whilst cutting hiring time. Learn more about our mission to transform recruitment through ethical AI that prioritises fairness and effectiveness.

Frequently asked questions

How does AI help identify unconscious bias in recruitment?

AI analyses patterns in hiring data to reveal unconscious preferences that human recruiters may not notice. The technology tracks correlations between candidate characteristics and selection outcomes across hundreds or thousands of decisions. When certain demographic groups consistently face rejection despite similar qualifications, algorithms flag these disparities for HR review. This brings hidden bias to light so that HR can target its interventions.

What measures ensure AI tools remain unbiased in hiring?

Regular audits of AI training data and algorithms verify that systems treat all candidates fairly. Developers must examine whether historical hiring data contains biases, then correct these imbalances before training machine learning models. Including feedback from diverse stakeholders during system design catches potential problems early. Transparent decision-making processes build trust by showing which factors influenced candidate rankings.

Can AI completely eliminate human bias in recruitment?

No. AI reduces bias, but it neither eliminates it nor replaces human judgement. Algorithms excel at objective evaluation of skills and qualifications, removing many sources of unconscious prejudice. However, human oversight remains essential to ensure fairness, interpret contextual factors, and make final hiring decisions. The best results come from collaboration between AI and people, where technology handles initial screening whilst professionals provide empathy and nuanced assessment.

How can HR leaders implement AI solutions effectively in 2026?

Choose reputable AI vendors with demonstrated ethical commitments and published fairness metrics. Train staff thoroughly on how to interview with AI tools, ensuring they understand both capabilities and limitations. Continuously monitor AI outcomes for fairness across different candidate groups, adjusting systems when disparities emerge. Start with pilot programmes before organisation-wide rollout, allowing time to refine processes and build confidence.
